bigdata - Graph Database

Why graphs

Now, we do know a way to avoid joins and studied it at length: denormalizing the data to (homogeneous) collections of trees is a way of “pre-computing” the joins statically, so that the data is already joined (via nesting) at runtime.

Now, why is it efficient? Because doing down a tree only necessitates following pointers in memory. But trees cannot have cycles. Graph databases provide a way of generalizing the use in-memory pointers to traverse data to the general case in which cycles are present: this is called “index-free adjacency.”

Kinds of graph databases

There are many different graph database system products on the market, and they can be classified along several dimensions: Labeled property graph model vs. triple stores;Read-intensive vs. write-intensive;Local vs. distributed;Native vs. non-native.

Graph data models

Labeled property graphs

Computer scientists need to go one step further and also design how to store graphs physically. One way of doing so is to create an associative array mapping each node to the list of nodes that it connects to via an edge (adjacency lists). Another storage form is with an adjacency matrix: each row and each column represent a node, and a 0 or a 1 indicate the absence or presence of an edge between the row node and the column node.

Now, this does not quite work for us, because labeled property graphs enhance mathematical graphs with extra ingredients: properties, and labels.

how to “convert” a relational table to a labeled property graph: labels can be seen as table names, nodes as records, and properties as the attribute values for the records. This shows that relational tables can be physically stored as labeled property graphs. Of course, this does not work the other way round: given a graph, it will often not be possible to convert it “back” to a table in this specific way.

Triple stores

Triple stores are a different and simpler model. It views the graph as nodes and edges that all have labels, but without any properties. The graph is then represented as a list of edges, where each edge is a triple with the label of the origin node (called the subject), the label of the edge (called the property), and the label of the destination node (called the object).

Triple stores typically provide SPARQL capabilities to reason about and stored RDF data.

Querying graph data

We will now have a look at query languages for querying graphs, with a focus on Cypher, which is neo4j’s query language.

Cypher Philosophy

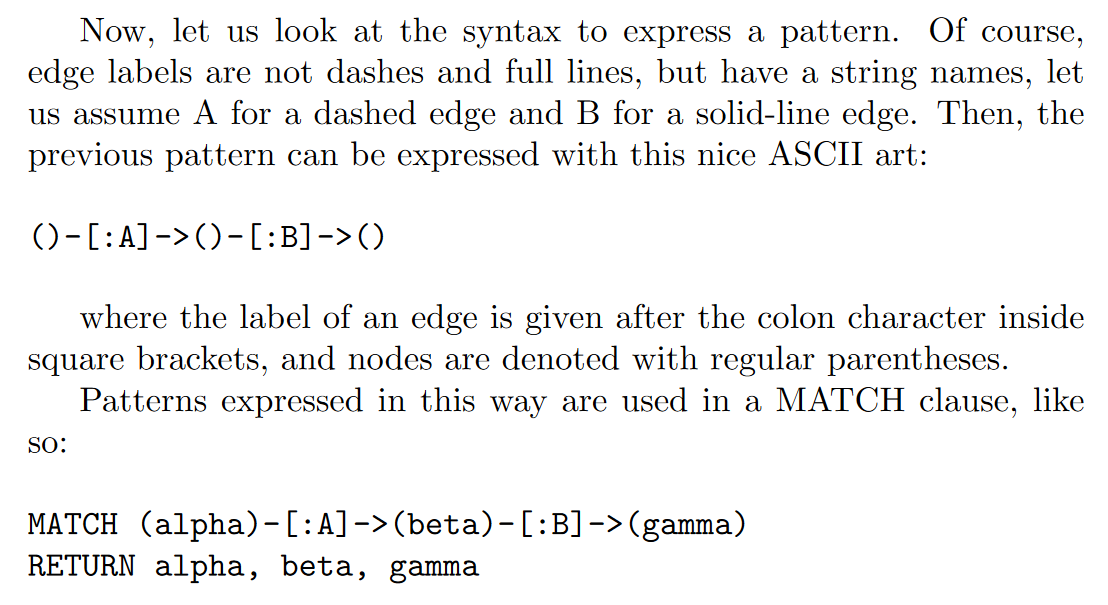

Cypher enables a user (or an application acting on behalf of a user) to ask the database to find data that matches a specific pattern. Colloquially, we ask the database to “find things like this.” And the way we describe what “things like this” look like is to draw them, using ASCII art.

Like most query languages, Cypher is composed of clauses. The simplest queries consist of a MATCH clause followed by a RETURN clause. There are other clauses we can use in a Cypher query: WHERE,WITH…AS…,CREATE,MERGE,DELETE,SET,UNION,FORWACH and so on.

A Comparison of Relational and Graph Modeling

Relational databases—with their rigid schemas and complex modeling characteristics—are not an especially good tool for supporting rapid change. What we need is a model that is closely aligned with the domain, but that doesn’t sacrifice performance, and that supports evolution while maintaining the integrity of the data as it undergoes rapid change and growth. That model is the graph model.

creating a graph

In practice, we tend to use CREATE when we’re adding to the graph and don’t mind duplication, and MERGE when duplication is not permitted by the domain.

Beginning a Query

In Cypher we always begin our queries from one or more well-known starting points in the graph—what are called bound nodes. Cypher uses any labels and property predicates supplied in the MATCH and WHERE clauses, together with metadata supplied by indexes and constraints, to find the starting points that anchor our graph patterns.

INDEXES AND CONSTRAINTS

To support efficient node lookup, Cypher allows us to create indexes per label and property combinations. For unique property values we can also specify constraints that assure uniqueness.

Neo4j

Neo4j is a graph database with native processing capabilities as well as native graph storage.

Native Graph Processing

Of the many different engine architectures, we say that a graph database has native processing capabilities if it exhibits a property called index-free adjacency.

A database engine that utilizes index-free adjacency is one in which each node maintains direct references to its adjacent nodes. Each node, therefore, acts as a micro-index of other nearby nodes, which is much cheaper than using global indexes. It means that query times are independent of the total size of the graph, and are instead simply proportional to the amount of the graph searched. A nonnative graph database engine, in contrast, uses (global) indexes to link nodes together.

Proponents for native graph processing argue that index-free adjacency is crucial for fast, efficient graph traversals. To understand why native graph processing is so much more efficient than graphs based on heavy indexing, consider the following. Depending on the implementation, index lookups could be O(log n) in algorithmic complexity versus O(1) for looking up immediate relationships. To traverse a network of m steps, the cost of the indexed approach, at O(m log n), dwarfs the cost of O(m) for an implementation that uses index-free adjacency.

Native Graph Storage

Neo4j stores graph data in a number of different store files. Each store file contains the data for a specific part of the graph (e.g., there are separate stores for nodes, relationships, labels, and properties).The division of storage responsibilities—particularly the separation of graph structure from property data—facilitates performant graph traversals, even though it means the user’s view of their graph and the actual records on disk are structurally dissimilar.

Like most of the Neo4j store files, the node store is a fixed-size record store, where each record is nine bytes in length. Fixed-size records enable fast lookups for nodes in the store file. If we have a node with id 100, then we know its record begins 900 bytes into the file. Based on this format, the database can directly compute a record’s location, at cost O(1), rather than performing a search, which would be cost O(log n).

Transactions

Transactions in Neo4j are semantically identical to traditional database transactions. Writes occur within a transaction context, with write locks being taken for consistency purposes on any nodes and relationships involved in the transaction. On successful completion of the transaction, changes are flushed to disk for durability, and the write locks released. These actions maintain the atomicity guarantees of the transaction.

CORE API, TRAVERSAL FRAMEWORK, OR CYPHER?

The Core API allows developers to fine-tune their queries so that they exhibit high affinity with the underlying graph. A well-written Core API query is often faster than any other approach. The downside is that such queries can be verbose, requiring considerable developer effort. Moreover, their high affinity with the underlying graph makes them tightly coupled to its structure. When the graph structure changes, they can often break. Cypher can be more tolerant of structural changes—things such as variable-length paths help mitigate variation and change.

The Traversal Framework is both more loosely coupled than the Core API (because it allows the developer to declare informational goals), and less verbose, and as a result a query written using the Traversal Framework typically requires less developer effort than the equivalent written using the Core API. Because it is a general-purpose framework, however, the Traversal Framework tends to perform marginally less well than a well-written Core API query.

exercise

Neo4j system design is different from mongodb on the consistency. MongoDB is eventually consistent, but neo4j is strong consistency and obey the ACID rules.

Cypher: note that label and property are case sensitive but the clause is not case sensitive.

RDF: Turtle Syntax

Mannual grouping and grouping sets: need to know how to translate between them.

grouping with rollup: rollup(c1,c2,c3) is equal to grouping sets((c1,c2,c3),(c1,c2),(c1),()). The ordering is important.

difference between neo4j and RDF:

Data Model:

Neo4j: Neo4j is a graph database that uses the property graph data model. In this model, nodes represent entities, relationships represent connections between entities, and both nodes and relationships can have key-value pairs as properties.

RDF: RDF is a data model for representing knowledge in the form of subject-predicate-object triples. Each triple represents a statement, and these triples can be used to build a graph of linked data. RDF is more abstract and can be implemented using various storage formats.

Query Language:

Neo4j: Neo4j uses the Cypher query language, which is specifically designed for querying graph databases. Cypher allows users to express graph patterns and relationships in a concise and readable manner.

RDF: RDF data is typically queried using SPARQL (SPARQL Protocol and RDF Query Language). SPARQL is a query language for querying and manipulating RDF data, and it provides powerful capabilities for navigating the graph structure.

Graph Structure:

Neo4j: In Neo4j, the graph is explicit, with nodes, relationships, and properties forming a connected graph structure. The focus is on relationships between entities and their properties.

RDF: RDF represents a graph, but the graph structure is more implicit. Resources are identified by URIs, and relationships are expressed through triples, allowing for the creation of a distributed and linked data web.

Schema:

Neo4j: Neo4j supports a flexible schema where nodes and relationships can have dynamic properties. While it provides some level of schema flexibility, users can define constraints and indexes to enforce certain structures.

RDF: RDF is schema-agnostic, allowing for more dynamic and extensible data representation. Schemas can be defined using RDF vocabularies and ontologies, such as RDFS and OWL.

Use Cases:

Neo4j: Neo4j is often used for applications where relationships and graph structures are central, such as social networks, recommendation engines, and network analysis.

RDF: RDF is commonly used in the context of the Semantic Web for representing and linking diverse data sources, allowing for interoperability and knowledge representation.

references

Robinson, I. et al. (2015). Graph Databases (2nd ed.)

bigdata - Graph Database

install_url to use ShareThis. Please set it in _config.yml.